LLMs can "hallucinate," producing convincing but false information. This is a significant problem for companies that need reliable AI. Retrieval-Augmented Generation (RAG) is one solution. By grounding responses in external data retrieved at query time, RAG brings relevant facts into the conversation, improving accuracy and reducing hallucinations in LLMs. Understanding RAG is a key part of using AI effectively.

What Are LLM Hallucinations?

LLM hallucinations are cases where an AI model produces information that is false, irrelevant, or disconnected from reality and presents it as accurate. Such fabrications are not intentional; they are a side effect of how LLMs are trained. The models are built to predict the most likely next word in a sequence, based on vast amounts of text and code. This predictive process sometimes leads them to produce plausible-sounding but entirely fictional claims and facts.
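
To make the "most likely next word" idea concrete, here is a toy sketch. It is not how a real LLM works internally (real models are neural networks that look at far more context); it only counts which word most often follows another in a tiny made-up corpus. The corpus and the function names `train_bigrams` and `most_likely_next` are assumptions invented for this illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for each word, which words follow it and how often."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical training text for the illustration.
corpus = [
    "the capital of france is paris",
    "the capital of italy is rome",
    "the capital of spain is madrid",
    "the largest city of france is paris",
]
counts = train_bigrams(corpus)

# The toy model only sees the last word, so it picks the statistically
# most common continuation rather than the factually correct one.
prompt = "the capital of italy is"
prediction = most_likely_next(counts, prompt.split()[-1])
print(prediction)  # "paris": fluent and frequent, but wrong for Italy
```

The output is plausible because it matches a common pattern, not because it was checked against a fact. That is the same failure mode, in miniature, as a hallucination.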

Why Do Hallucinations Happen?

LLM hallucinations are a result of several factors:

Outdated Training Data

LLMs are trained on a static snapshot of data, much of it from the internet. They lack real-time information, so when asked about current events they may fill the gaps with outdated or fabricated information.

Knowledge Gaps

The training data is vast, but it is not comprehensive. When a model is asked about a niche or obscure subject, it may lack sufficient information on the topic. It will then try to construct an answer from similar but inadequate patterns.

Ambiguous Prompts

Vague or poorly worded questions can confuse an LLM, causing it to make incorrect assumptions and generate off-target responses. The model tries to infer the user's intent as accurately as possible, but ambiguity can lead it in the wrong direction.

Over-Creativity

The same mechanisms that let LLMs be creative and generate new text can also produce fabrications. The model is built as a creative pattern matcher, not a factual database.

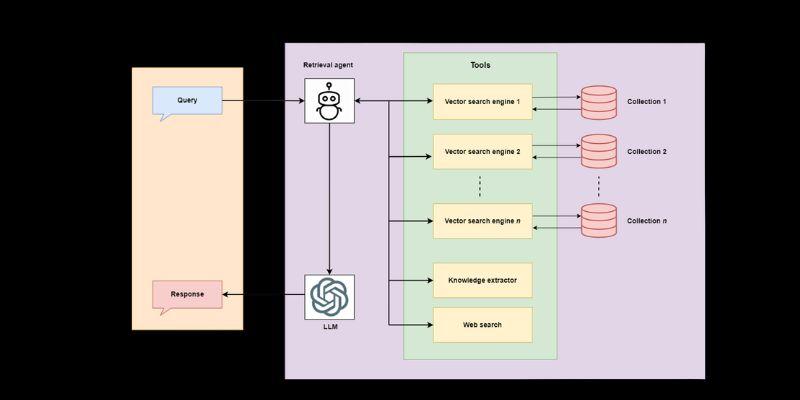

How RAG Reduces LLM Hallucinations

Retrieval-Augmented Generation (RAG) is an architectural approach that supplements an LLM with references to external knowledge bases. Instead of relying only on its internal, pre-trained knowledge, a RAG-powered system first retrieves relevant information from a credible source and then uses it to generate a response.

Grounding the process this way provides a factual safety net: the model's output is based on verifiable data, which substantially reduces the likelihood of hallucinations.

The RAG Workflow Explained

The RAG process can be divided into three primary steps:

Retrieval

When a user submits a query, the RAG system doesn't immediately send it to the LLM. It first searches an external knowledge base, typically internal company documents, product guides, a specialized database, or a curated collection of articles, for information relevant to the query. This is commonly done by converting the documents and the query into numerical vectors (embeddings) and searching for the closest matches.
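
The retrieval step can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production design: the `embed` function below is a stand-in that uses simple word counts, whereas real systems typically use a learned embedding model and a vector database. The sample documents and the function names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: a word-count vector (assumption; real systems use a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, top_k=1):
    """Return up to `top_k` documents most similar to the query, skipping zero-score ones."""
    query_vec = embed(query)
    scored = [(cosine_similarity(query_vec, embed(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]


# Hypothetical knowledge base for the illustration.
documents = [
    "Refunds are available within 30 days of purchase.",
    "Our support team is available Monday through Friday.",
    "The premium plan includes priority support and extra storage.",
]
results = retrieve("How many days do I have to request a refund?", documents)
print(results)
```

Note that the word-count stand-in only matches exact words ("refund" does not match "refunds"), which is one reason real systems prefer learned embeddings that capture meaning rather than spelling.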

Augmentation

The relevant information retrieved from the knowledge base is then combined with the original user prompt. This augmented prompt gives the LLM the specific context and factual data it needs to formulate an accurate answer. For example, the prompt becomes something like: "Using the following information: [retrieved text], answer this question: [user's original question]."
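
As a rough sketch, the augmentation step is little more than string assembly. The function name `build_augmented_prompt` and the sample passage are assumptions made for this example; the template wording follows the one quoted above.

```python
def build_augmented_prompt(question, retrieved_passages):
    """Combine retrieved passages and the user's question into one prompt."""
    context = "\n".join(f"- {passage}" for passage in retrieved_passages)
    return (
        "Using the following information:\n"
        f"{context}\n\n"
        f"Answer this question: {question}"
    )


# Hypothetical retrieved text and user question.
passages = ["Refunds are available within 30 days of purchase."]
prompt = build_augmented_prompt("What is the refund window?", passages)
print(prompt)
```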

Generation

Lastly, the augmented prompt is sent to the LLM. With this factual context in hand, the model can produce a response that is directly informed by the supplied data rather than relying on its generalized (and possibly incorrect) internal knowledge.
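
Putting the three steps together, the overall flow might look like the sketch below. The `retrieve` and `generate` arguments are placeholders: in a real system `retrieve` would query a knowledge base and `generate` would call an LLM. Here both are hypothetical stubs so the example runs on its own.

```python
def rag_answer(question, retrieve, generate):
    """Run retrieval, augmentation, and generation in order."""
    # 1. Retrieval: fetch passages relevant to the question.
    passages = retrieve(question)
    # 2. Augmentation: combine the passages with the original question.
    context = "\n".join(passages)
    prompt = (
        f"Using the following information:\n{context}\n\n"
        f"Answer this question: {question}"
    )
    # 3. Generation: hand the augmented prompt to the model.
    return generate(prompt)


received_prompts = []


def stub_retrieve(question):
    """Stand-in for a knowledge base lookup (assumption)."""
    return ["Refunds are available within 30 days of purchase."]


def stub_generate(prompt):
    """Stand-in for an LLM call (assumption); records the prompt it was given."""
    received_prompts.append(prompt)
    return "You can request a refund within 30 days of purchase."


reply = rag_answer("What is the refund window?", stub_retrieve, stub_generate)
print(reply)
```

Passing the retriever and generator in as functions keeps the pipeline independent of any particular database or model provider.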

The Benefits of Using RAG

Beyond minimizing hallucinations, RAG offers several substantial benefits as part of an AI strategy.

Improved Accuracy and Reliability

The most significant advantage of RAG is improved factual accuracy. Because the LLM bases its responses on a curated collection of verified documents, it is less likely to invent facts. This is essential in business applications where misinformation can have serious consequences, such as customer support, financial reporting, or legal research.

Access to Real-Time Information

Standard LLMs are limited by the freshness of their training data. RAG overcomes this limitation by connecting to live, up-to-date knowledge bases. This means your AI application can respond based on the latest company policies, product options, or market statistics, so users get current and useful information.

Enhanced Transparency and Trust

Because RAG bases its answers on specific source documents, you can point to the exact data that was used to produce an answer. This transparency lets users and administrators verify where the information came from, which builds trust and provides a simple audit trail. If a response is wrong, it is easier to trace the problem back to the source data and correct it.
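
One way to preserve that audit trail is to carry the source identifiers alongside the generated text. The sketch below assumes the retrieval step returns `(doc_id, passage)` pairs; the `SourcedAnswer` type, the file name `refund-policy.md`, and the `placeholder_generate` function are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SourcedAnswer:
    text: str
    source_ids: list


def answer_with_sources(question, retrieved, generate):
    """Generate an answer and record which documents were supplied as context."""
    context = "\n".join(f"[{doc_id}] {passage}" for doc_id, passage in retrieved)
    prompt = (
        f"Using the following information:\n{context}\n\n"
        f"Answer this question: {question}"
    )
    source_ids = [doc_id for doc_id, _ in retrieved]
    return SourcedAnswer(text=generate(prompt), source_ids=source_ids)


def placeholder_generate(prompt):
    """Stand-in for an LLM call (assumption)."""
    return "Refunds are available within 30 days of purchase."


retrieved = [("refund-policy.md", "Refunds are available within 30 days of purchase.")]
answer = answer_with_sources("What is the refund window?", retrieved, placeholder_generate)
print(answer.text, answer.source_ids)
```

Keeping `source_ids` next to the answer means a reviewer can open the cited document and check the claim directly.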

Reduced Need for Costly Retraining

Fine-tuning or retraining an LLM on new data is a complex and expensive process. RAG offers a more efficient alternative: to update the AI's knowledge, you only need to update the external data source. The LLM itself doesn't need to be retrained, making RAG a more scalable and cost-effective way to keep your AI application current.
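
A small sketch shows why no retraining is involved: the knowledge lives in a data store, so changing a document changes what gets retrieved on the very next query. The `KnowledgeBase` class and its keyword-overlap search are simplified assumptions for illustration, not a real library API.

```python
import re


class KnowledgeBase:
    """Hypothetical in-memory document store with naive keyword search."""

    def __init__(self):
        self._documents = {}

    def upsert(self, doc_id, text):
        """Add a document or replace the existing one with the same id."""
        self._documents[doc_id] = text

    def search(self, query):
        """Return the document sharing the most words with the query, or None."""
        query_terms = set(re.findall(r"[a-z0-9]+", query.lower()))
        best_id, best_overlap = None, 0
        for doc_id, text in self._documents.items():
            doc_terms = set(re.findall(r"[a-z0-9]+", text.lower()))
            overlap = len(query_terms & doc_terms)
            if overlap > best_overlap:
                best_id, best_overlap = doc_id, overlap
        return self._documents.get(best_id)


kb = KnowledgeBase()
kb.upsert("refund-policy", "Refunds are accepted within 30 days.")
before = kb.search("How many days for refunds?")

# The policy changes: update the document, not the model.
kb.upsert("refund-policy", "Refunds are accepted within 60 days.")
after = kb.search("How many days for refunds?")
print(before, after)
```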

Final Thoughts

For organizations, avoiding AI hallucinations is essential. Inaccurate AI output can lead to lost trust, poor decisions, and legal problems. RAG offers a robust solution that helps make AI systems more precise and trustworthy. By grounding large language models in verifiable facts, RAG turns them into more reliable knowledge workers. As you integrate AI, RAG is one way to combine the power of language models with factual accuracy.