As AI makes critical decisions in our daily lives, from medical diagnoses to product recommendations, a key question emerges: How can we trust outputs we don't understand? This is the "black box" problem. Explainable AI (XAI) aims to solve this by creating AI models that can explain their reasoning in a way humans can understand.

Understanding the "Black Box" Problem

To appreciate the significance of XAI, we must first understand the black box problem. Many of the most sophisticated AI models, particularly those built on deep learning and neural networks, are incredibly complex. They can have millions of parameters, which are adjusted automatically as the model trains on massive amounts of data.

Although such models can reach impressive accuracy in tasks such as image recognition or natural language processing, their logic is typically opaque. Even the developers who build them may not be able to trace the specific path a model followed to reach a given conclusion. This lack of transparency is a particular problem in high-stakes situations.

When an AI system denies a loan, flags a medical image as showing a serious illness, or steers an autonomous vehicle, we want to know why. Without that knowledge, it becomes hard to find errors, correct bias, and establish who is accountable for the system's behavior.

Why Does AI Explainability Matter?

The push for explainability is not just an academic exercise; it has significant real-world implications. Building trust and ensuring fairness are the main reasons XAI is becoming a requirement across industries.

Building Trust and Confidence

For people to adopt AI systems, they must trust the systems' recommendations. A physician is more likely to trust an AI-driven diagnostic tool when it can point to the specific features of a scan that led to its conclusion. Likewise, a financial advisor can rely on AI investment advice with more confidence when the system can explain which market indicators it considered. Explainability builds a bridge of trust between human users and machine intelligence.

Identifying and Mitigating Bias

AI models are trained on data, and when that data encodes existing societal biases, the model will learn and perpetuate them. A recruiting tool trained on a company's past hiring records, for example, may be biased against certain applicants because their group was underrepresented in the past. XAI can help uncover these hidden biases by showing which input features influence a model the most. Once developers know what led a model to a biased decision, they can work to correct it and produce fairer results.
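One simple way to see which input features a model leans on is to shuffle one feature at a time and count how many predictions change. The sketch below is a simplified variant of permutation importance (it counts changed predictions rather than a drop in accuracy). The applicant data, the `school_x` feature, and `biased_model` are all hypothetical stand-ins invented for illustration, not a real hiring system.

```python
import random

# Hypothetical applicants: (skill score from 0 to 1, attended "school X" as 0 or 1).
applicants = [
    (0.85, 0), (0.40, 1), (0.70, 0), (0.30, 1),
    (0.60, 0), (0.75, 1), (0.50, 0), (0.20, 1),
]
FEATURES = ["skill", "school_x"]


def biased_model(row):
    """Stand-in for a trained model that mostly rewards the school, not the skill."""
    skill, school_x = row
    return 1 if school_x == 1 or skill > 0.8 else 0


def permutation_importance(model, rows, n_repeats=50, seed=0):
    """Average share of predictions that change when one column is shuffled."""
    rng = random.Random(seed)
    baseline = [model(r) for r in rows]
    importance = {}
    for col, name in enumerate(FEATURES):
        changed = 0
        for _ in range(n_repeats):
            # Shuffle a single column while leaving the other features intact.
            column = [r[col] for r in rows]
            rng.shuffle(column)
            for row, new_value, old_pred in zip(rows, column, baseline):
                shuffled = list(row)
                shuffled[col] = new_value
                changed += model(shuffled) != old_pred
        importance[name] = changed / (n_repeats * len(rows))
    return importance


importance = permutation_importance(biased_model, applicants)
print(importance)
```

If `school_x` scores much higher than `skill`, as it should for this toy model, that is a signal to check whether the feature is acting as a proxy for something the model should not be using.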

Ensuring Regulatory Compliance and Accountability

As AI becomes more widespread, governments and regulatory bodies are drafting new rules to govern its use. Laws such as the European Union's General Data Protection Regulation (GDPR) include provisions often described as a "right to explanation": the right of individuals to receive meaningful information about how automated systems make decisions about them.

XAI plays a significant role in helping organizations meet these legal requirements and demonstrate that their AI systems operate fairly and transparently. When something goes wrong, explainability also helps establish accountability by tracing the error to its root cause.

Improving Model Performance

The process of making an AI model explainable can also improve its performance. Developers are better placed to diagnose and fix problems in a system when they can interpret why the model is making errors. For example, when an image-recognition model mislabels an object, XAI may reveal that it is focusing on background features that should have been ignored. That insight lets developers retrain the model on better data or change its architecture, ultimately making it more accurate and reliable.

Core Methods of Explainable AI

There are several ways to achieve explainability, each with its own advantages and use cases. They fall broadly into two groups: models that are transparent by design, and methods that explain complex models after they have been built.

Inherently Interpretable Models

Some AI models are relatively simple and transparent by nature. These are often called "white box" models because their internal workings are easy to understand. Examples include:

Decision Trees

These models use a tree-like structure of if-then-else rules to make decisions. You can easily follow the path from the root of the tree to a final decision leaf to understand the logic.

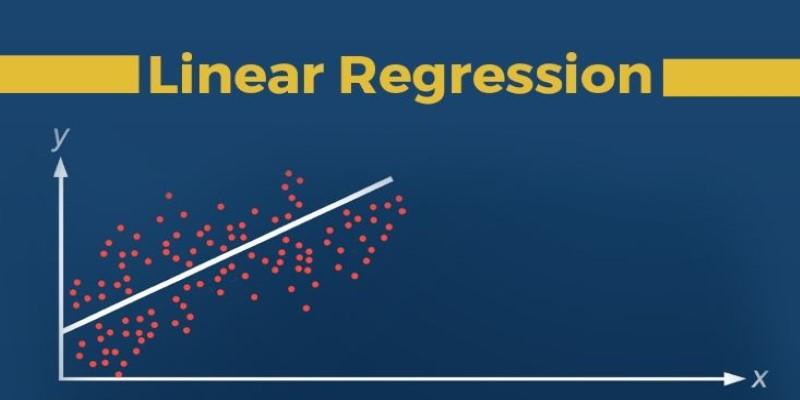
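As a minimal sketch of why such a model is easy to follow, the hand-written tree below records every rule it applies on the way to a leaf. The loan scenario, the feature names, and the thresholds are all invented for illustration; a real tree would learn its splits from data.

```python
def decide_loan(income, debt_ratio, missed_payments):
    """Toy decision tree: returns the decision and the rules that led to it."""
    path = []
    if income < 30_000:
        path.append("income < 30000")
        if missed_payments > 0:
            path.append("missed_payments > 0")
            return "deny", path
        path.append("missed_payments == 0")
        return "review", path
    path.append("income >= 30000")
    if debt_ratio > 0.4:
        path.append("debt_ratio > 0.4")
        return "review", path
    path.append("debt_ratio <= 0.4")
    return "approve", path


decision, path = decide_loan(income=45_000, debt_ratio=0.25, missed_payments=1)
print(decision, "because", " and ".join(path))
```

The returned `path` is the explanation: it lists the branch taken at each node from the root to the final leaf.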

Linear Regression

This statistical method models the relationship between a dependent variable and one or more independent variables. The coefficients assigned to each variable clearly show its influence on the outcome.
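To make the role of the coefficient concrete, here is a small sketch that fits a one-variable regression with the closed-form least-squares formulas. The experience and salary figures are made-up illustration data, and `fit_line` is a hypothetical helper, not a library function.

```python
def fit_line(xs, ys):
    """Closed-form ordinary least squares for a single independent variable."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data: years of experience vs. salary in thousands.
years = [1, 2, 3, 4]
salary = [35, 40, 45, 50]

slope, intercept = fit_line(years, salary)
print(f"salary = {intercept:.1f} + {slope:.1f} * years")
```

The slope reads directly as an explanation: in this toy data, each additional year of experience is associated with 5 (thousand) more in salary.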

While these models are highly interpretable, they may not always achieve the same level of accuracy as more complex models for certain tasks.

Post-Hoc Explanation Techniques

For more complex "black box" models like neural networks, researchers have developed techniques to provide explanations after the model has been trained. These are known as post-hoc methods.

LIME (Local Interpretable Model-agnostic Explanations)

LIME works by making small changes to the input data and observing how the model's prediction changes. From those observations it builds a simpler, interpretable model that approximates how the complex model behaves in the local neighborhood of a single prediction. For an image classifier, for example, it can indicate which regions of an image mattered most for classifying it as a "cat".
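The core idea can be sketched without any library: perturb the input, query the black box, weight each sample by how close it is to the original, and fit a weighted linear model. This is a simplified tabular analogue written for illustration, not the `lime` package's implementation; `black_box`, the noise scale, and the kernel width are all assumptions.

```python
import math
import random


def black_box(x):
    """Stand-in for an opaque model: nonlinear in x[0], linear in x[1]."""
    return x[0] ** 2 + 3.0 * x[1]


def solve(a, b):
    """Solve a linear system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    coef = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * coef[c] for c in range(r + 1, n))
        coef[r] = (m[r][n] - s) / m[r][r]
    return coef


def explain_locally(model, instance, n_samples=500, noise=0.1,
                    kernel_width=0.25, seed=0):
    """Fit a proximity-weighted linear surrogate around one instance."""
    rng = random.Random(seed)
    dim = len(instance) + 1  # one extra slot for the intercept
    a = [[0.0] * dim for _ in range(dim)]
    b = [0.0] * dim
    for _ in range(n_samples):
        sample = [v + rng.gauss(0.0, noise) for v in instance]
        dist_sq = sum((s - v) ** 2 for s, v in zip(sample, instance))
        weight = math.exp(-dist_sq / kernel_width ** 2)  # closer samples count more
        z = [1.0] + sample
        y = model(sample)
        # Accumulate the weighted normal equations.
        for i in range(dim):
            b[i] += weight * z[i] * y
            for j in range(dim):
                a[i][j] += weight * z[i] * z[j]
    return solve(a, b)[1:]  # drop the intercept, keep per-feature weights


local_weights = explain_locally(black_box, [1.0, 2.0])
print([round(w, 2) for w in local_weights])
```

Near the point (1.0, 2.0) the surrogate's weights should come out close to 2 and 3, the local slopes of the black box, even though the model is not linear overall.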

SHAP (SHapley Additive exPlanations)

SHAP values are based on a concept borrowed from cooperative game theory, the Shapley value, which measures how much each feature contributes to a prediction. SHAP offers a consistent, unified way to explain any machine learning model by showing how each feature pushes the output from a baseline value to the final prediction.
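For a model with only a few features, Shapley values can be computed exactly by averaging each feature's marginal contribution over every order in which features could be switched from a baseline to their actual values. The sketch below does that by brute force; the toy `model` and the all-zero baseline are assumptions for illustration, and the `shap` library relies on much faster approximations than this enumeration.

```python
from itertools import permutations


def model(x):
    """Toy model with one additive term and one interaction term."""
    return 2.0 * x[0] + x[1] * x[2]


def shapley_values(model, instance, baseline):
    """Exact Shapley values by enumerating all feature orderings."""
    n = len(instance)
    phi = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)
        prev = model(current)
        for i in order:
            # Switch feature i from its baseline value to its actual value.
            current[i] = instance[i]
            new = model(current)
            phi[i] += new - prev
            prev = new
    return [p / len(orderings) for p in phi]


instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(model, instance, baseline)
print(phi)
```

The contributions add up to the difference between the prediction for the instance and the prediction for the baseline, and the interaction term `x[1] * x[2]` is split evenly between the two features that produce it.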

By using these techniques, organizations can keep the high performance of complex models while adding a layer of transparency and understanding.

Final Thoughts

Explainable AI (XAI) becomes more necessary as AI systems grow more powerful and autonomous. It is now a requirement for responsible AI development. A focus on transparency produces AI that is accountable, fair, and trustworthy. The path toward fully explainable AI is still being charted, but the outlook is optimistic. By building XAI, we help ensure that AI benefits humanity and remains approachable. The goal is human-centered innovation that makes AI both intelligent and understandable.