Large language models (LLMs) are powerful, but they are often oversized for narrow NLP tasks, where smaller, specialized models are the more efficient choice. This guide shows how to apply ModernBERT to text classification and how to offset poor performance on limited data with synthetic data. You will learn how to fine-tune these compact language models into an effective text classifier.

Understanding the Tools

Before we build our classifier, let's get familiar with the core components we'll be using: ModernBERT and the concept of synthetic data generation.

What is ModernBERT?

ModernBERT is a modernized encoder-only model in the BERT (Bidirectional Encoder Representations from Transformers) family. It is not a distilled or scaled-down copy of BERT. Instead, it updates the original architecture and training recipe, aiming for better accuracy and faster processing at a size comparable to the original BERT models.

The main features of ModernBERT are:

- Compact Size: The base variant has roughly 150 million parameters, in the same range as BERT-base and far smaller than a typical LLM, making it fast to fine-tune and easy to deploy.

- High Efficiency: Architectural updates such as alternating local and global attention and the removal of padding tokens lower the computational cost, and the model accepts sequences of up to 8,192 tokens.

- Strong Performance: Despite its size, ModernBERT maintains competitive accuracy on a wide range of NLP tasks, including text classification.

These characteristics make ModernBERT a good option when resources are limited or when an application has to be deployed quickly.

The Power of Synthetic Data

A lack of high-quality labeled training data is one of the most significant obstacles in machine learning. Collecting and annotating data by hand is laborious and costly. Synthetic data generation is an attractive solution to this problem.

With a capable generator model, such as a large language model, we can produce new, synthetic data points that resemble the original data. For text classification, this means producing new text examples for every category we want to classify. This process is a form of data augmentation, and it helps to:

- Increase Dataset Size: A larger dataset can help the model generalize better and prevent overfitting.

- Improve Model Robustness: Exposing the model to a more diverse range of examples makes it more resilient to variations in real-world data.

- Balance Classes: If some classes have fewer examples than others, synthetic data can be generated to balance the dataset, leading to fairer and more accurate predictions.

For our project, we will use a generator model to create synthetic text samples, which enlarges our training set and can improve the performance of our classifier.
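As a rough illustration of the class-balancing idea above, the sketch below counts how many synthetic examples each class would need to match the largest class. The function name `synthetic_quota` and the seed label counts are hypothetical, chosen only for illustration.

```python
from collections import Counter

def synthetic_quota(labels, target=None):
    """Return how many synthetic examples each class needs to reach `target`.

    If `target` is None, every class is topped up to the size of the largest class.
    """
    counts = Counter(labels)
    goal = target if target is not None else max(counts.values())
    return {label: max(0, goal - n) for label, n in counts.items()}

# Hypothetical, deliberately imbalanced seed set (counts are made up).
seed_labels = (
    ["World"] * 120 + ["Sports"] * 80 + ["Business"] * 45 + ["Sci/Tech"] * 30
)

quota = synthetic_quota(seed_labels)
print(quota)
# {'World': 0, 'Sports': 40, 'Business': 75, 'Sci/Tech': 90}
```

Passing an explicit `target` (for example, `synthetic_quota(seed_labels, target=200)`) would grow every class, not only the minority ones.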

Building a Text Classifier: A Step-by-Step Guide

Now, let's walk through the process of creating a text classification model using ModernBERT and synthetic data. We will use a popular dataset, AG News, which sorts news articles into four categories: World, Sports, Business, and Sci/Tech.

Step 1: Set Up Your Environment

First, install the required Python libraries. The core libraries for this project are Hugging Face's transformers and datasets, plus a deep learning framework such as PyTorch. To install them, run the following command: pip install transformers datasets torch. You will also want an environment with access to a GPU, which accelerates model training significantly.
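Before moving on, it can help to confirm that the installation worked. The following is a minimal sketch using only the standard library to report installed versions; the helper names `check_environment` and `gpu_available` are hypothetical. ModernBERT needs a fairly recent transformers release (support was added in version 4.48, to the best of our knowledge), so an older installation may need upgrading.

```python
from importlib import metadata, util

REQUIRED = ("transformers", "datasets", "torch")

def check_environment(packages=REQUIRED):
    """Map each package name to its installed version, or None if it is missing."""
    report = {}
    for name in packages:
        try:
            report[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = None
    return report

def gpu_available():
    """Return True only if PyTorch is installed and can see a CUDA device."""
    if util.find_spec("torch") is None:
        return False
    import torch  # imported lazily so the check also runs without PyTorch
    return torch.cuda.is_available()

report = check_environment()
for name, version in report.items():
    print(f"{name}: {version or 'NOT INSTALLED'}")
print("CUDA GPU available:", gpu_available())
```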

Step 2: Generate Synthetic Data

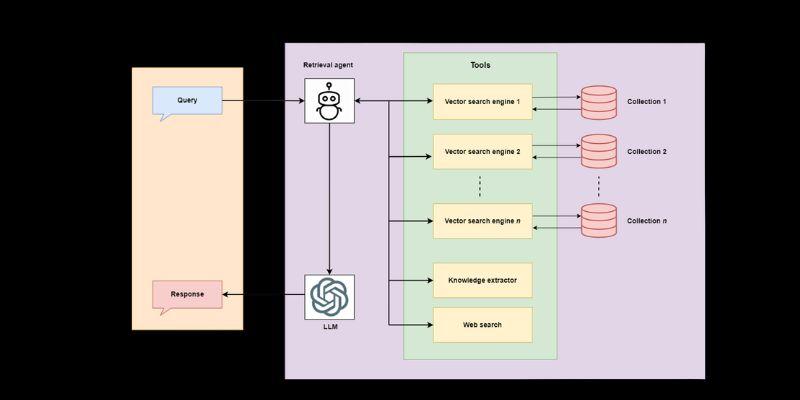

To compensate for a small amount of labeled data, we will add synthetic examples. A pre-trained LLM can be used for this. The goal is to generate new sentences that fit into our existing news categories.

Let's assume we have a small, initial dataset. We can prompt a generator model to create new examples. For instance, to generate a new "Sports" article snippet, we could use a prompt like:

"Generate a short news headline about a recent soccer match."

The model might return: "City Clinches League Title in Dramatic Final-Day Victory."

By generating hundreds or thousands of such examples for each category, we can significantly augment our training data. It is crucial to review the generated data to ensure its quality and relevance. Poor-quality synthetic data can harm model performance, so a human-in-the-loop approach for validation is often recommended.
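The generation loop described above could be sketched as follows. This is an illustration under assumptions, not a definitive implementation: `generate_fn` is a placeholder for whatever LLM call you use, `fake_llm` is a stand-in so the example runs without a model, and the prompt wording and the four-word minimum are arbitrary choices.

```python
import re

CATEGORIES = ["World", "Sports", "Business", "Sci/Tech"]

def build_prompt(category):
    """Build a generation prompt for one news category."""
    return f"Generate a short news headline for the '{category}' category."

def normalize(text):
    """Lowercase and collapse whitespace so near-identical outputs compare equal."""
    return re.sub(r"\s+", " ", text).strip().lower()

def generate_examples(generate_fn, category, n, max_attempts=None):
    """Collect up to `n` unique, non-trivial examples for `category`.

    `generate_fn(prompt) -> str` is a placeholder for a real LLM call.
    """
    max_attempts = max_attempts or n * 5
    seen, examples = set(), []
    for _ in range(max_attempts):
        if len(examples) >= n:
            break
        text = generate_fn(build_prompt(category)).strip().strip('"')
        key = normalize(text)
        # Basic automatic filters; human review is still recommended afterwards.
        if len(key.split()) < 4 or key in seen:
            continue
        seen.add(key)
        examples.append({"text": text, "label": CATEGORIES.index(category)})
    return examples

# Stand-in generator so the sketch runs without a model (hypothetical outputs).
_canned = iter([
    "City Clinches League Title in Dramatic Final-Day Victory",
    "city clinches league title in   dramatic final-day victory",  # duplicate
    "Goal!",                                                        # too short
    "Underdogs Stun Champions in Penalty Shootout Thriller",
])

def fake_llm(prompt):
    return next(_canned)

samples = generate_examples(fake_llm, "Sports", n=2)
print(samples)
```

The automatic filters only catch duplicates and very short outputs; checking that a generated headline actually belongs to its category still calls for the human review mentioned above.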

Step 3: Load and Prepare the Data

After the data has been generated and curated, it is preprocessed before being passed to the model. Preprocessing can include data cleaning, such as removing duplicates, correcting language errors, or standardizing text. It can also include tokenization, lemmatization, or encoding, depending on the model used. Well-prepared data lets the model learn patterns more easily, which improves the entire training process.

The outcome of the training phase depends directly on the quality of these preprocessing steps. For example, inconsistent data and tokenization errors can lead to suboptimal performance or outright model failure. To make the machine learning pipeline robust and able to deliver credible predictions, implement a thorough preprocessing plan and audit the processed data.
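A minimal sketch of the cleaning steps described above, using only the standard library. The helper names, the sample records, and the 20% validation fraction are assumptions made for illustration. Tokenization itself is normally left to the model's own tokenizer, and lemmatization is usually unnecessary for transformer models, so neither appears here.

```python
import random
import re

def clean_text(text):
    """Strip leftover HTML tags and collapse repeated whitespace."""
    text = re.sub(r"<[^>]+>", " ", text)
    return re.sub(r"\s+", " ", text).strip()

def prepare(records, val_fraction=0.2, seed=42):
    """Clean, de-duplicate, shuffle, and split records into (train, validation)."""
    seen, cleaned = set(), []
    for rec in records:
        text = clean_text(rec["text"])
        key = text.lower()
        if not text or key in seen:  # drop empty rows and case-insensitive duplicates
            continue
        seen.add(key)
        cleaned.append({"text": text, "label": rec["label"]})
    random.Random(seed).shuffle(cleaned)
    n_val = max(1, int(len(cleaned) * val_fraction))
    return cleaned[n_val:], cleaned[:n_val]

# Hypothetical raw records mixing original and synthetic examples.
raw = [
    {"text": "Stocks  rally as <b>inflation</b> cools", "label": 2},
    {"text": "stocks rally as inflation cools", "label": 2},  # duplicate
    {"text": "   ", "label": 0},                              # empty
    {"text": "New telescope captures distant galaxy", "label": 3},
    {"text": "Striker signs record transfer deal", "label": 1},
    {"text": "Leaders meet for climate summit", "label": 0},
    {"text": "Chipmaker unveils faster processor", "label": 3},
]

train, val = prepare(raw)
print(len(train), len(val))  # 4 1
```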

Step 4: Fine-Tune the ModernBERT Model

Fine-tuning adapts a pre-trained model to a specific task by training it further on a smaller, task-specific dataset. In this step, the model builds on the language knowledge encoded in the parameters it learned during pre-training and adds task-specific expertise so that it performs better on the new task. Fine-tuning typically involves choosing a learning rate, batch size, and number of epochs that prevent overfitting and maximize performance on validation samples. Regularization and close monitoring of the training process are also significant factors in achieving the best results.

Moreover, the results of fine-tuning depend heavily on the dataset used. The data must be of high quality, well annotated, and tailored to the intended task. An unbalanced dataset can lead to biased predictions, which can have serious consequences in real-life applications. With appropriate preprocessing, data balancing, and suitable evaluation metrics, practitioners can fine-tune ModernBERT to make robust and accurate predictions for a particular application.
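The fine-tuning step could look roughly like the sketch below, which uses the Hugging Face `Trainer` API. Several things here are assumptions rather than part of the original guide: the checkpoint name `answerdotai/ModernBERT-base`, the `ag_news` dataset identifier, the hyperparameter values (starting points, not tuned settings), the 10% validation split, and the function name `fine_tune`. Calling `fine_tune()` downloads the model and dataset and is best run on a GPU.

```python
MODEL_ID = "answerdotai/ModernBERT-base"  # assumed checkpoint; swap as needed
ID2LABEL = {0: "World", 1: "Sports", 2: "Business", 3: "Sci/Tech"}
LABEL2ID = {name: idx for idx, name in ID2LABEL.items()}

# Assumed starting hyperparameters, not tuned values.
HPARAMS = {
    "learning_rate": 5e-5,
    "per_device_train_batch_size": 32,
    "per_device_eval_batch_size": 64,
    "num_train_epochs": 3,
    "weight_decay": 0.01,  # mild regularization
}

def fine_tune(output_dir="modernbert-agnews", max_length=256):
    """Fine-tune ModernBERT on AG News and return the trained Trainer."""
    # Imported lazily so the constants above can be inspected without heavy dependencies.
    from datasets import load_dataset
    from transformers import (
        AutoModelForSequenceClassification,
        AutoTokenizer,
        DataCollatorWithPadding,
        Trainer,
        TrainingArguments,
    )

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    dataset = load_dataset("ag_news")  # columns: "text", "label"

    def tokenize(batch):
        return tokenizer(batch["text"], truncation=True, max_length=max_length)

    tokenized = dataset.map(tokenize, batched=True)
    # Hold out part of the training split for validation; keep the test split for Step 5.
    split = tokenized["train"].train_test_split(test_size=0.1, seed=42)

    model = AutoModelForSequenceClassification.from_pretrained(
        MODEL_ID, num_labels=len(ID2LABEL), id2label=ID2LABEL, label2id=LABEL2ID
    )
    args = TrainingArguments(
        output_dir=output_dir,
        eval_strategy="epoch",  # evaluate on the validation split after every epoch
        save_strategy="epoch",
        **HPARAMS,
    )
    trainer = Trainer(
        model=model,
        args=args,
        train_dataset=split["train"],
        eval_dataset=split["test"],
        data_collator=DataCollatorWithPadding(tokenizer),  # pads each batch dynamically
    )
    trainer.train()
    return trainer
```

Synthetic examples from Step 2 could be converted to a `datasets.Dataset` and merged into the training split with `datasets.concatenate_datasets` before tokenization; that step is omitted here to keep the sketch short.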

Step 5: Evaluate the Model

To evaluate the performance of the fine-tuned ModernBERT model, it is crucial to use well-defined, task-specific metrics. Standard metrics, such as accuracy, precision, recall, and F1 score, provide valuable insight into how well the model performs on classification tasks. Other kinds of tasks call for other metrics, such as BLEU for language generation or Mean Squared Error (MSE) for regression. Comparing these metrics against baseline models is an effective way to assess whether ModernBERT offers tangible improvements.

Additionally, practitioners should evaluate the model across different data subsets to ensure its robustness and reliability. This includes testing on unseen data, edge cases, and potentially adversarial examples to identify weaknesses or biases in the model. By adopting a comprehensive evaluation strategy, developers can build confidence in the model's ability to generalize and perform well across diverse scenarios, ultimately ensuring its practical usability.
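To make the classification metrics named above concrete, here is a small pure-Python sketch that computes accuracy, per-class precision, recall, and F1, and macro-averaged F1. The function name `evaluate_predictions` and the toy labels are hypothetical; in practice a library such as scikit-learn provides equivalent, better-tested functions.

```python
def evaluate_predictions(y_true, y_pred, labels):
    """Return (accuracy, macro_f1, per_class) for a multi-class classifier."""
    pairs = list(zip(y_true, y_pred))
    per_class = {}
    for label in labels:
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        per_class[label] = {"precision": precision, "recall": recall, "f1": f1}
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    macro_f1 = sum(m["f1"] for m in per_class.values()) / len(labels)
    return accuracy, macro_f1, per_class

# Toy labels: 0=World, 1=Sports, 2=Business, 3=Sci/Tech (made-up predictions).
y_true = [0, 0, 1, 1, 2, 2, 3, 3]
y_pred = [0, 1, 1, 1, 2, 2, 3, 2]

accuracy, macro_f1, per_class = evaluate_predictions(y_true, y_pred, labels=[0, 1, 2, 3])
print(f"accuracy={accuracy:.2f} macro_f1={macro_f1:.2f}")
# accuracy=0.75 macro_f1=0.73
```

Looking at the per-class numbers alongside the averages helps reveal whether one category, for example a class that relied heavily on synthetic data, is lagging behind the others.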

Conclusion

This guide presents a practical approach to building high-performing text classifiers. Combining ModernBERT, a lightweight model, with synthetic data generation addresses both resource and data constraints efficiently. The approach supports cost-efficient and rapid deployment of advanced NLP solutions. Bigger is not necessarily better in AI; smaller, specialized models can deliver tailored, state-of-the-art results. These methods are worth exploring, since they can open new possibilities for your NLP projects and help address your business needs.