Artificial intelligence (AI) is often depicted as a black box: an opaque machine that delivers results without disclosing how or why it reached them. The metaphor implies that AI relies on hidden methods that neither users nor developers can see or follow. This common belief is becoming less accurate. Advances in explainable AI and AI transparency have done much to lift the obscurity around AI decision-making. These methods help stakeholders understand the inner workings of an AI model and the reasons behind its output.

Recognizing that AI is not inherently a black box, but an engineered system that can and should be made transparent, is central to building trust, responsibility, and the ethical deployment of AI. In this article, we consider the importance of AI transparency, explainable AI, and transparent AI systems, and how these ideas dispel the myth of AI as an opaque black box.

What Is AI Transparency?

AI transparency is the principle and practice of making artificial intelligence systems open and interpretable, enabling stakeholders to understand an AI model's construction, data inputs, algorithms, and decision-making processes. It goes beyond simply providing outputs to unveiling the "how" and "why" behind AI behaviour.

Transparency in AI means providing detailed information on:

- The data used and how it is processed for training

- The algorithms and model architectures that generate the decision logic

- The context and setting in which the AI operates

- The methods for understanding and limiting errors or biases

This broad transparency enables users, developers, regulators, and auditors to inspect AI systems critically. Building transparent AI systems of this kind is crucial, especially in industries where AI decisions affect health, money, safety, and legal judgments.

Transparency has three cardinal objectives:

- Explainability: Providing clear explanations of why the AI makes certain predictions or classifications, so that users know why a decision was made.

- Interpretability: Making it possible for people to form a model of how the system works internally.

- Accountability: Ensuring that the creators or operators of AI can be held accountable for its actions and results.

By embedding these principles in AI design, developers ensure that AI ceases to be a baffling "black box" and becomes a system users can trust and understand.

Why Explainable AI Is Important

Explainable AI is about communicating to people how a particular AI system arrives at its results. Unlike black-box models, which present results with little context, explainable AI systems make individual AI decisions understandable.

For example, an explainable AI model used in healthcare to predict diseases would not only make a prediction but also indicate the test results or symptoms that led to that diagnosis. This degree of explanation helps clinicians verify the AI's recommendation and integrate it responsibly into patient care.
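One way to picture this is a model whose prediction breaks down into per-feature contributions. The sketch below assumes a simple logistic-regression style risk score. The feature names, weights, and patient values are all made up for illustration and carry no clinical meaning.

```python
import math

# Hypothetical weights for an illustrative risk model.
# These numbers are invented for the example, not clinical values.
WEIGHTS = {"fasting_glucose": 0.04, "bmi": 0.08, "age": 0.02}
BIAS = -8.0


def predict_with_explanation(patient):
    """Return the predicted probability and each feature's log-odds contribution."""
    # In a linear model, each feature contributes weight * value to the log-odds.
    contributions = {name: WEIGHTS[name] * patient[name] for name in WEIGHTS}
    log_odds = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    # Rank features by the size of their contribution, largest first.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return probability, ranked


patient = {"fasting_glucose": 130, "bmi": 31, "age": 54}  # made-up record
probability, ranked = predict_with_explanation(patient)

print(f"Predicted risk: {probability:.2f}")
for name, value in ranked:
    print(f"  {name}: {value:+.2f} log-odds")
```

Because the score is a plain weighted sum, a clinician can see which inputs pushed the prediction up and by how much. More complex models generally need post-hoc tools to produce a comparable breakdown.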

Explainable AI can help to:

- Identify and address biases in AI models that result from skewed training data.

- Detect and correct unwanted model behaviour, drift, and errors.

- Support testing and compliance with regulations that require an understandable decision-making process, such as the GDPR or the AI Act in Europe.

The ability to unpack recommendations increases users' confidence in, and acceptance of, AI recommendations in critical tasks. It is no overstatement to say that explainable AI makes AI a partner one can rely on.
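As a rough illustration of the bias check mentioned in the list above, the following sketch compares how often a model gives a positive decision to each group. This gap is commonly called the demographic parity difference. It is only one of many possible fairness checks, and the group labels and decisions here are invented for the example.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of positive decisions per group; records are (group, decision) pairs."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        positives[group] += int(decision)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


# Made-up model decisions: 1 = approved, 0 = declined.
decisions = [
    ("A", 1), ("A", 1), ("A", 0), ("A", 1),
    ("B", 1), ("B", 0), ("B", 0), ("B", 0),
]

rates = selection_rates(decisions)
gap = parity_gap(rates)
print(rates, gap)
```

A large gap does not prove the model is unfair, but it flags where the training data and decision logic deserve a closer look.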

The Difference Between AI Transparency and AI Explainability

Despite some overlap, AI transparency and AI explainability concern themselves with different things:

AI transparency has the wider scope: it discloses how AI models are designed, trained, validated, and used, bringing the overall AI lifecycle and the system architecture into view.

Explainability is a subset of transparency that focuses on individual AI outputs or decisions: how and why a given recommendation or prediction was made, expressed in a way people can understand.

Together, the two offer a comprehensive picture of AI systems: macro-level transparency about how the system functions, plus micro-level explainability of individual decisions. They are the two foundational pillars of transparent AI, which in turn supports auditability, trustworthiness, and responsible use.

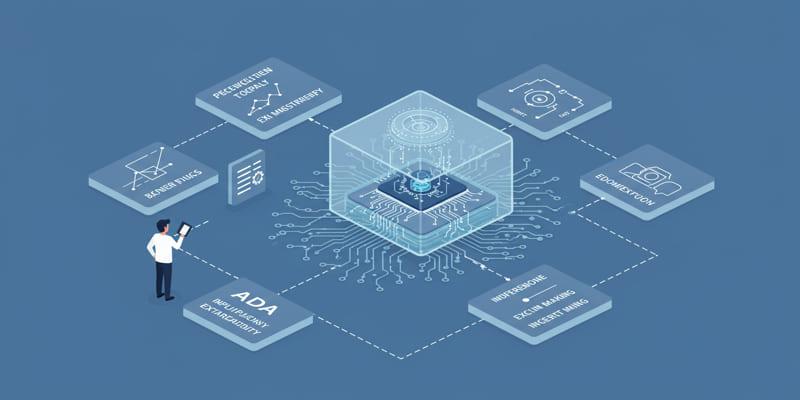

Methods of Creating Transparent AI Systems

Designing transparent AI systems involves a trade-off between performance and interpretability. Simpler, more interpretable models are often less accurate than complex ones such as deep neural networks. Nevertheless, several measures can introduce transparency without a substantial loss of accuracy:

- Simple Models: Using models that are inherently interpretable, such as decision trees, linear regression, or rule-based systems.

- Post-hoc Explanation: Using tools and algorithms that explain a model's decisions after they have been made, e.g., SHAP values, LIME, or attention maps.

- Visualizations: Using graphical displays to show how various features affect the AI's output.

- Documentation: Keeping records of data origin, model versions, and decision rules.

- Continuous Monitoring: Monitoring model behavior continuously to identify anomalies or biases as they occur.

Applying these methods helps narrow the gap between today's complex AI processes and simpler, more explainable, and more accountable systems.
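To give a feel for post-hoc explanation, here is a minimal sketch of a perturbation-based attribution: each feature is reset to a baseline value, one at a time, and the change in the model's score is recorded. This is a simplified stand-in for the idea behind tools such as SHAP and LIME, not an implementation of either. The scoring function, feature names, and baseline values are hypothetical.

```python
def black_box_score(applicant):
    """Stand-in for an opaque model; a real system would call a trained model here."""
    ratio = applicant["income"] / (applicant["income"] + applicant["debt"])
    return ratio - 0.1 * applicant["late_payments"]


def perturbation_attributions(model, instance, baseline):
    """Score change when each feature is reset to its baseline value, one at a time."""
    original = model(instance)
    attributions = {}
    for name in instance:
        perturbed = dict(instance)          # copy so the original stays untouched
        perturbed[name] = baseline[name]    # replace a single feature
        attributions[name] = original - model(perturbed)
    return attributions


# Made-up applicant and an assumed "typical" baseline applicant.
applicant = {"income": 60, "debt": 20, "late_payments": 2}
baseline = {"income": 50, "debt": 25, "late_payments": 0}

attributions = perturbation_attributions(black_box_score, applicant, baseline)
for name, value in sorted(attributions.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name}: {value:+.3f}")
```

Changing one feature at a time ignores interactions between features. SHAP addresses this by averaging each feature's effect over many combinations of the other features, at a higher computational cost.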

Practical Uses that Embrace Transparency and Explainability

Companies across industries are applying explainable and transparent AI to support ethical use and regulatory compliance:

- Healthcare: AI systems support medical image processing and diagnostics with explanations that clinicians can verify and trust.

- Finance: Banks can adopt transparent AI models to assess credit risk and detect fraud in a way that is fair and open to inspection by regulators.

- Legal: AI assists with case research and sentencing by listing the factors behind its recommendations, so that they can be checked for bias.

- Automotive: In autonomous vehicles, interpretability is needed to evaluate the safety-critical decisions made by algorithms.

- Customer Service: Recommendation engines and chatbots can justify their suggestions, which can improve user satisfaction.

These examples show that AI systems are becoming clearer, more human-friendly, and easier to control.

Conclusion

The move toward AI systems that are transparent about their risks as well as their inefficiencies is underway and heading in a positive direction. Continued development of interpretability theory and practice, regulatory frameworks that require transparency, and growing public demand for accountable AI all point toward steady improvement.

By pursuing AI transparency, explainable AI, and transparent AI systems, we can create an AI-driven future in which innovation is balanced with ethics, transparency, and societal trust.