In recent years, the rapid advancement of smart programs has created a powerful sense of limitless possibility. Systems can generate photorealistic images, write working code, and summarize massive documents in seconds. Yet behind this impressive capability lie fundamental limits to what the technology can achieve.

Understanding what advanced computational systems cannot do is vital. It sets realistic expectations, prevents misuse in high-stakes environments, and clearly defines the irreplaceable value of human intellect and consciousness. The key limitations are not temporary technical glitches; they are rooted in the very structure of the systems themselves, which rely on statistics and pattern matching rather than true understanding.

Lack of True Understanding and Common Sense

Despite their fluency with language and images, these systems operate purely on statistical patterns. They lack true semantic understanding: they do not grasp the "why," or the conceptual meaning behind the data.

The Stochastic Parrot

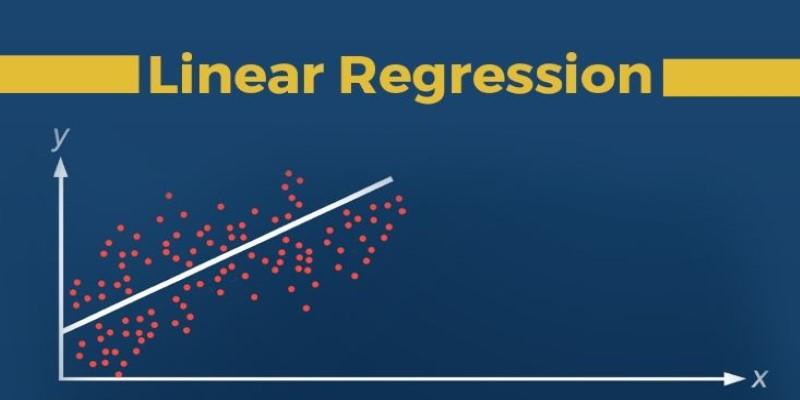

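Large language models (LLMs) and similar tools are, at their core, highly capable predictors. Given the text so far, they estimate the most probable next word (strictly, the next token) based on patterns in their training data. Image generators work analogously, producing the pixel patterns most consistent with what they have learned.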

Pattern Matching vs. Semantics: Ask a program to complete the sentence "The fire was so hot that the floor began to..." and it will predictably choose "melt" or "burn." It does so because those continuations most often follow similar phrases in its training data. The system does not, however, understand the physical properties of fire, heat, or melting. It is a highly sophisticated predictor, often called a "stochastic parrot," capable of mimicking coherence without comprehending meaning.
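The simplest possible next-word predictor makes the idea concrete. The sketch below is a bigram model that only counts which word follows which in a tiny, made-up corpus. Real LLMs use neural networks over tokens and far longer contexts, so treat this as an illustration of the principle, not of how any production system is built. The function names and the corpus are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for every word, how often each other word follows it."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def next_word_distribution(follows, word):
    """Turn raw follow-counts into probabilities for the next word."""
    counts = follows.get(word.lower(), Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}


def predict_next(follows, word):
    """Pick the single most frequent follower, or None for an unseen word."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Toy, made-up training data (hypothetical, for illustration only).
corpus = [
    "the fire was so hot that the floor began to burn",
    "the roof caught fire and began to burn",
    "the wax was so hot that it began to melt",
    "the old barn began to burn",
]

model = train_bigrams(corpus)
print(next_word_distribution(model, "to"))  # {'burn': 0.75, 'melt': 0.25}
print(predict_next(model, "to"))            # burn
```

The prediction after "to" never looks at "fire" or "hot." It reflects only which words followed "to" most often, with no notion of temperature involved. Larger models condition on much more context, but their output is still a probability over continuations.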

The Common Sense Problem: Human intelligence relies on a vast store of unspoken, common-sense knowledge (e.g., "A dog is larger than an ant," or "If you drop a glass, it will probably break"). Computational systems struggle with this because common sense is rarely stated explicitly in their training data. Faced with a novel scenario outside its learned context, a system can produce illogical or nonsensical output.

Hallucination and Confabulation

When a system gives a confident but false answer, the error is usually called a "hallucination." It is sometimes also called a "confabulation," a term borrowed from psychology.

Fabricating Facts: The system is optimized for statistical plausibility rather than factual truth, so it can generate persuasive-sounding but entirely incorrect citations, facts, or pieces of code. This is a serious risk in fields like law and medicine, where factual accuracy is non-negotiable.
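A toy generator shows how optimizing for local plausibility can produce fluent falsehoods. The minimal sketch below assumes a simple bigram model, which is far cruder than a real LLM, and it enumerates every sentence that model can produce. Each step only requires that a word pair appeared somewhere in training. The case names, years, and function names are fictional and hypothetical.

```python
from collections import defaultdict


def build_transitions(sentences):
    """Map each word to the set of words seen immediately after it."""
    transitions = defaultdict(set)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            transitions[current].add(nxt)
    return transitions


def all_generations(transitions, start, max_len=12):
    """Enumerate every word sequence the model can produce from `start`.

    Each step only checks that the word pair appeared somewhere in training;
    nothing checks the finished sentence against the original facts.
    """
    results = []

    def walk(path):
        followers = transitions.get(path[-1], set())
        if not followers or len(path) >= max_len:
            results.append(" ".join(path))
            return
        for nxt in sorted(followers):
            walk(path + [nxt])

    walk([start])
    return results


# Two fictional "facts" form the entire training set (hypothetical data).
facts = [
    "acme v beacon was decided in 1990",
    "corwin v dale was decided in 1954",
]

model = build_transitions(facts)
for sentence in all_generations(model, "acme"):
    label = "in training data" if sentence in facts else "fabricated"
    print(f"{sentence:<36} {label}")
```

Every generated sentence is grammatical and locally plausible, yet three of the four describe cases that never existed. In miniature, this is the same failure mode as a fabricated legal citation.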

Inability to Self-Improve Beyond Data

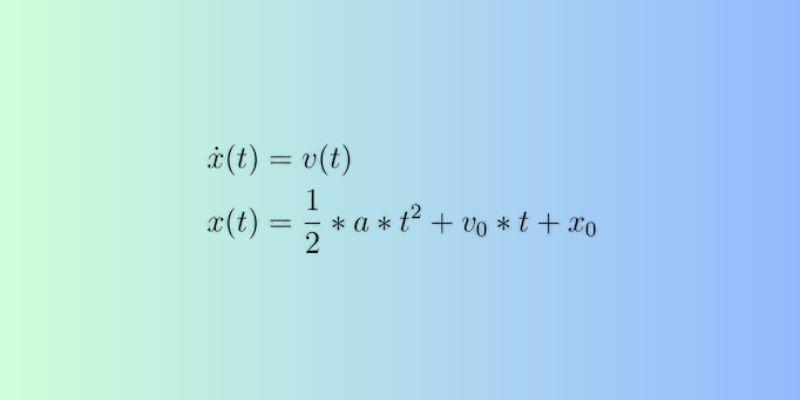

Computational systems are fundamentally limited by the data they are trained on. They cannot autonomously generate truly novel knowledge or adapt to radically new environments.

The Data Dependence Wall

The capability of any system is capped by the quality, volume, and scope of its training dataset.

Brittleness and Bias: If a system trained on images of dogs is shown a new, unfamiliar animal, it cannot recognize that animal as something new. A typical classifier will force the image into one of the categories it already knows, often with high confidence. Furthermore, if the training data contains historical biases (e.g., skewed hiring records), the system will reproduce, and can even amplify, that bias in its predictions, making it unreliable and unfair. The system cannot critique or correct its own flawed data; humans must intervene to clean and audit the input.
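Both failure modes in this paragraph can be sketched with deliberately tiny stand-ins, assuming Python 3.8+ for `math.dist`. A nearest-centroid classifier illustrates brittleness, and a counting-based "hiring model" illustrates bias. All features, records, and function names (`fit_centroids`, `classify`, `fit_hire_rates`, `recommend`) are hypothetical and chosen only for illustration.

```python
import math
from collections import Counter

# --- Brittleness: a closed-set classifier can only answer with labels it knows.
# Hypothetical features per animal: (body length in cm, ear length in cm).
TRAINING = {
    "dog": [(60.0, 10.0), (70.0, 12.0), (55.0, 9.0)],
    "cat": [(45.0, 6.0), (40.0, 5.0), (50.0, 7.0)],
}


def fit_centroids(training):
    """Average the feature vectors for each known label."""
    return {
        label: tuple(sum(values) / len(values) for values in zip(*points))
        for label, points in training.items()
    }


def classify(centroids, features):
    """Return the nearest known label; there is no 'unknown' answer."""
    return min(centroids, key=lambda label: math.dist(centroids[label], features))


# --- Bias: a model fit to skewed history reproduces (here, amplifies) the skew.
# Hypothetical historical hiring records: (applicant group, was hired).
HISTORY = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 2 + [("B", False)] * 8
)


def fit_hire_rates(records):
    """Estimate P(hired | group) by simple counting."""
    hired, total = Counter(), Counter()
    for group, was_hired in records:
        total[group] += 1
        hired[group] += int(was_hired)
    return {group: hired[group] / total[group] for group in total}


def recommend(rates, group, threshold=0.5):
    """Recommend hiring whenever the learned rate exceeds the threshold."""
    return rates[group] > threshold


centroids = fit_centroids(TRAINING)
# A giraffe-sized input looks nothing like the training data, yet still gets a known label.
print(classify(centroids, (400.0, 15.0)))  # dog

rates = fit_hire_rates(HISTORY)
print(rates)                                         # {'A': 0.8, 'B': 0.2}
print(recommend(rates, "A"), recommend(rates, "B"))  # True False
```

In the historical records, 20% of group B applicants were hired, but the thresholded model recommends none of them. This is one simple way a learned system can amplify a bias rather than merely repeat it. Real classifiers and hiring models are far more complex, but the structural points (a fixed label set, and learning whatever patterns the data contains) broadly carry over.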

No True Creativity (Transformational Novelty): As established in previous discussions, systems excel at combining existing ideas in new ways (combinational creativity). However, they cannot achieve transformational creativity—the ability to invent an entirely new artistic paradigm or reject the very rules they were trained on. They lack the conscious motivation to break the conventions they were built upon.

Lack of Embodiment and Real-World Experience

Computational systems lack physical interaction with the real world, a crucial component of human learning and problem-solving.

No Lived Experience: A system can read every book ever written about gravity, but it cannot know what it feels like to drop a ball. This lack of "embodiment" fundamentally limits its ability to understand causality, safety, and physical constraint, making autonomous decisions in complex, real-world environments difficult and risky.

Absence of Human Qualities: Ethics and Consciousness

The most profound and unbridgeable limits concern the non-technical, philosophical qualities that define human intelligence.

Consciousness and Intent

Computational systems lack the fundamental subjective experience that drives human action.

No Self-Awareness: The system has no beliefs, desires, goals, or sense of "self." It simply executes mathematical operations whose parameters were set during training. Without consciousness, it cannot possess genuine intent or emotional context.

No Moral Compass: The system does not understand or internalize concepts of right and wrong, suffering, or fairness. Any "ethical decision" it makes (e.g., in a self-driving car accident scenario) is simply the product of human-defined rules, objectives, and training data. If those are flawed, the ethical outcome will be flawed, and the machine cannot recognize the moral lapse.

The Need for Human Judgment

Ultimately, the most complex and high-stakes decisions require a blend of qualities unique to humans.

Empathy and Nuance: In fields like therapy, education, or law, judgment requires empathy, understanding non-verbal cues, and navigating unique human situations for which there is no statistical precedent. These deeply human skills cannot be automated.

Final Responsibility: Because computational systems lack consciousness and intent, they cannot assume legal or ethical liability. The ultimate responsibility for any decision made by a system—from a medical diagnosis to a legal argument—must always remain with the human professional who oversees, validates, and deploys the tool.

Conclusion

However fast and capable they may be, advanced computational systems are ultimately limited because they are statistical machines. They cannot truly grasp meaning, they lack basic common sense, and they cannot arrive at new ideas beyond the examples they were trained on. Most importantly, they lack the core human qualities of consciousness, a sense of right and wrong, and real feelings.

Knowing where that line is drawn is essential for the future. The machine handles speed, analysis, and pattern spotting, while the human holds onto the work only we can do: coming up with creative ideas, making ethical decisions, and applying nuanced, real-world judgment. The computer is a powerful tool, but the human mind remains in charge.